Vesicle simulation crashed with dry martini force

8 years 3 months ago #5360

by lsloneil

Vesicle simulation crashed with dry martini force was created by lsloneil

Hi,

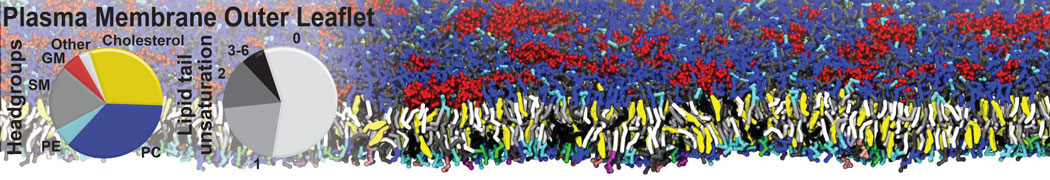

I'm trying to simulate a large lipid vesicle (~100 nm in diameter) with the Dry Martini force field. The system consists of about 1.4 million particles. I'm trying to equilibrate it in the NVT ensemble in a simulation box of length 120 nm, using a timestep of 10 fs. The simulation was running on 144 cores (6 nodes with 24 cores each). Below is my .mdp input file.

define = -DPOSRES -DPOSRES_FC=1000 -DBILAYER_LIPIDHEAD_FC=200

integrator = sd

tinit = 0.0

dt = 0.01

nsteps = 8000000

nstxout = 100000

nstvout = 10000

nstfout = 10000

nstlog = 10

nstenergy = 10000

nstxtcout = 1000

xtc_precision = 100

nstlist = 10

ns_type = grid

pbc = xyz

rlist = 1.4

epsilon_r = 15

coulombtype = Shift

rcoulomb = 1.2

vdw_type = Shift

rvdw_switch = 0.9

rvdw = 1.2

DispCorr = No

tc-grps = system

tau_t = 4.0

ref_t = 295

; Pressure coupling:

Pcoupl = no

; GENERATE VELOCITIES FOR STARTUP RUN:

;gen_vel = yes

;gen_temp = 295

;gen_seed = 1452274742

refcoord_scaling = all

cutoff-scheme = group
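As a quick sanity check on the settings above, the following sketch converts the step counts into simulated time. It only restates values from the .mdp file; the helper name `interval_ps` is made up for illustration.

```python
# Values copied from the .mdp file above.
dt_ps = 0.01          # dt in ps, i.e. 10 fs
nsteps = 8_000_000
nstlog = 10
nstxtcout = 1000
nstenergy = 10_000


def interval_ps(nst, dt=dt_ps):
    """Simulated time (ps) between two outputs written every `nst` steps."""
    return nst * dt


total_ns = nsteps * dt_ps / 1000.0   # ps -> ns
log_entries = nsteps // nstlog       # number of entries written to the log

print(f"total simulated time : {total_ns:.0f} ns")
print(f"xtc frame interval   : {interval_ps(nstxtcout):.1f} ps")
print(f"energy interval      : {interval_ps(nstenergy):.1f} ps")
print(f"log interval         : {interval_ps(nstlog):.2f} ps ({log_entries} entries)")
```

With `nstlog = 10` the log is written every 10 steps, which is far more often than any of the other outputs.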

The simulation crashed with the following error message.

Step 6105820:

Atom 164932 moved more than the distance allowed by the domain decomposition (4.000000) in direction Z

distance out of cell 127480.656250

Old coordinates: 38.785 21.966 103.077

New coordinates: -477239.938 16192.882 127588.617

Old cell boundaries in direction Z: 60.580 107.937

New cell boundaries in direction Z: 60.632 107.958

Program mdrun_mpi, VERSION 5.0.4

Source code file: /scratch/build/git/chemistry-roll/BUILD/sdsc-gromacs-5.0.4/gromacs-5.0.4/src/gromacs/mdlib/domdec.c, line: 4390

Fatal error:

An atom moved too far between two domain decomposition steps

This usually means that your system is not well equilibrated

For more information and tips for troubleshooting, please check the GROMACS

website at www.gromacs.org/Documentation/Errors

Error on rank 58, will try to stop all ranks

Halting parallel program mdrun_mpi on CPU 58 out of 144

gcq#25: "This Puke Stinks Like Beer" (LIVE)

[cli_58]: aborting job:

application called MPI_Abort(MPI_COMM_WORLD, -1) - process 58
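The numbers in the error message can be checked with a few lines of arithmetic. This is only a sketch: it assumes that "distance out of cell" is the new Z coordinate minus the upper Z boundary of the new cell, which is inferred from the reported values rather than taken from the GROMACS source.

```python
import math

# Values copied from the error message and the .mdp file above.
dt_ps = 0.01
crash_step = 6_105_820
old = (38.785, 21.966, 103.077)               # nm
new = (-477239.938, 16192.882, 127588.617)    # nm
new_cell_z_upper = 107.958                    # nm
reported_out_of_cell = 127480.656250          # nm

# Simulated time reached before the crash.
time_ns = crash_step * dt_ps / 1000.0

# Assumed definition: how far the atom ended up beyond its cell in Z.
out_of_cell = new[2] - new_cell_z_upper

# Total displacement between the old and new coordinates.
displacement = math.sqrt(sum((n - o) ** 2 for n, o in zip(new, old)))

print(f"time at crash     : {time_ns:.2f} ns")
print(f"out of cell (Z)   : {out_of_cell:.3f} nm (reported {reported_out_of_cell:.3f})")
print(f"total displacement: {displacement:.1f} nm (box length is 120 nm)")
```

The displacement is several orders of magnitude larger than the box itself, so the coordinates of this atom appear to have diverged numerically rather than drifted gradually.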

I suspect the crash is related to the large load imbalance produced by the domain decomposition. My system (a lipid vesicle in implicit solvent) is highly inhomogeneous, so the domain decomposition algorithm produces very uneven domains: some are empty and some are full of particles. When I ran the simulation with fewer CPUs (96 cores) and a smaller timestep (1 fs), there was no problem for over 6 million steps.
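To illustrate the inhomogeneity described above, here is a rough sketch that scatters points on a hollow sphere of radius 50 nm centred in a 120 nm box and counts how many fall into each cell of a static, regular grid. The 6 x 6 x 4 grid, the reduced point count, and the function name are assumptions for illustration only; the real decomposition used by mdrun is not given in the post, the bilayer thickness is ignored, and dynamic load balancing would shift the cell boundaries.

```python
import math
import random


def shell_cell_counts(n_points, radius, box, grid, seed=0):
    """Count points on a spherical shell per cell of a regular nx*ny*nz grid."""
    rng = random.Random(seed)
    nx, ny, nz = grid
    counts = [0] * (nx * ny * nz)
    center = box / 2.0
    for _ in range(n_points):
        # A normalised Gaussian vector gives a uniform direction on the sphere.
        gx, gy, gz = rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1)
        norm = math.sqrt(gx * gx + gy * gy + gz * gz)
        x = center + radius * gx / norm
        y = center + radius * gy / norm
        z = center + radius * gz / norm
        # Map each coordinate to a cell index, clamped to the last cell.
        ix = min(int(x / box * nx), nx - 1)
        iy = min(int(y / box * ny), ny - 1)
        iz = min(int(z / box * nz), nz - 1)
        counts[(ix * ny + iy) * nz + iz] += 1
    return counts


# Hypothetical setup: 144 cells, 100k sample points instead of 1.4 million.
counts = shell_cell_counts(100_000, radius=50.0, box=120.0, grid=(6, 6, 4))
empty = sum(1 for c in counts if c == 0)
mean = sum(counts) / len(counts)

print(f"cells: {len(counts)}, empty: {empty}")
print(f"max / mean occupancy: {max(counts) / mean:.2f}")
```

Corner cells lie entirely outside the sphere and the innermost cells lie entirely inside the hollow interior, so both kinds stay empty in this static picture.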

However, I would still like to use more cores and a larger timestep to equilibrate my system. Is there a better way to control the load balancing and domain decomposition so that I can equilibrate the system more efficiently? The Dry Martini paper says that the domain decomposition scheme should be chosen carefully for this kind of vesicle simulation. Is there any guidance on how to do so?
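For reference, mdrun accepts an explicit decomposition grid through its `-dd` option and a dynamic load balancing switch through `-dlb`. The sketch below merely enumerates the grids that are possible for 144 ranks and the resulting cell sizes in a 120 nm box; it does not decide which grid suits a vesicle. It assumes a cubic box and that all ranks do particle-particle work (no separate PME ranks, which seems plausible with `coulombtype = Shift`). The function names are invented for this example.

```python
def dd_grids(n_ranks):
    """Return all (nx, ny, nz) triples whose product equals n_ranks."""
    grids = []
    for nx in range(1, n_ranks + 1):
        if n_ranks % nx:
            continue
        rest = n_ranks // nx
        for ny in range(1, rest + 1):
            if rest % ny == 0:
                grids.append((nx, ny, rest // ny))
    return grids


def cell_edges(box, grid):
    """Edge lengths (nm) of one cell for a cubic box split by a regular grid."""
    return tuple(box / n for n in grid)


box_nm = 120.0
# List the most cube-like grids first (smallest spread between dimensions).
candidates = sorted(dd_grids(144), key=lambda g: max(g) - min(g))
for grid in candidates[:5]:
    ex, ey, ez = cell_edges(box_nm, grid)
    print(f"grid {grid}: cell {ex:.1f} x {ey:.1f} x {ez:.1f} nm")
```

Any candidate grid would still need to be tested on the actual system, since occupancy per cell depends on where the vesicle sits in the box.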

Thanks very much.
